Language?

We exist in the collective story of the human unconscious

Language, differing cultures, and differing spheres of the sub-collective unconscious. Given the notion of culture as a pseudo-aligned mesa-optimizer, and given that culture is ontologically prior to language, we’d assume that this tool for compressing reality has also been corrupted, given its basis in culture.

I often ponder the teleological significance of language: the argmax, over the set of all grammatical functions, of its utility function. Language, in its most primitive form, can be thought of as a set of strings. A string in this sense is a sequence of symbols drawn from a primitive alphabet. The set of strings that can be formed from that alphabet, arranged sequentially and following the rules of said grammar, makes up the language. Language, from my perspective, seems to be a tool “created” by humans in an attempt to compress the higher-dimensional abstract realm of objective reality into subjective qualia, allowing us to transfer information from one person’s perspective onto another’s. One could think of the brain as a sort of Turing machine-based automaton. This emergence of language as a quasi-compression tool is quite pivotal.
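To make the "set of strings" framing concrete, here is a minimal sketch in Python. The two-symbol alphabet and the toy grammar rule (some number of `a`s followed by the same number of `b`s) are assumptions picked purely for illustration; they are not a claim about any natural language.

```python
from itertools import product

# Hypothetical toy alphabet, chosen only for illustration.
ALPHABET = ("a", "b")


def is_grammatical(s: str) -> bool:
    """Toy grammar rule: n copies of 'a' followed by n copies of 'b', n >= 1."""
    n = len(s) // 2
    return n >= 1 and s == "a" * n + "b" * n


def language(max_len: int) -> set[str]:
    """All grammatical strings over ALPHABET with at most max_len symbols."""
    return {
        "".join(symbols)
        for length in range(1, max_len + 1)
        for symbols in product(ALPHABET, repeat=length)
        if is_grammatical("".join(symbols))
    }


print(sorted(language(6), key=len))  # ['ab', 'aabb', 'aaabbb']
```

Out of the 126 possible strings of length one to six over this alphabet, only three survive the grammar, which is the sense in which a grammar carves a language out of the space of all symbol sequences.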

It is often acknowledged that there was significant alpha in having an efficient means of transferring information, in the form of intelligible sounds, from one person to another. It was a pivotal advantage that allowed prehistoric Homo sapiens to seemingly eradicate all other hominin species we encountered, because the emergence of language, albeit in its most primitive form, let us organize to such a high degree.

If one were to take an information theory perspective, we could start with four simple questions. How many bits of information are encoded by the transmitter over the given medium with said method? How lossily compressed is said form of communication? What is the theorized maximal bitrate, or channel capacity, of said medium of information propagation? And is the entropic load minimized relative to other methods?

To ground these in more concrete classes, we could apply this set of questions to three classes of information propagation mechanisms.

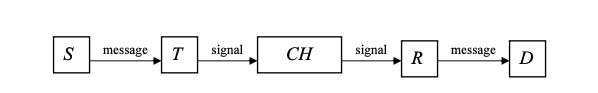

First, and most prehistoric, as noted earlier, are unintelligible sounds and gestures as a mechanism for information propagation. Let us define an architecture for a generic information propagation system as described by Shannon.

- Let the source S be the set of states, which one can conceive of as the letters of a primitive alphabet.

- Let the encoder E be the encoding function that transforms the written or spoken message into bits of information to be transmitted.

- Let the channel CH be the medium through which said encoded signal passes.

- Let the receiver R be the decoding function that decompresses the encoded signal to be received by the destination.

- Let the destination D be the entity receiving said message.

Figure 1. A primitive information propagation system
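The five components above can be sketched as a tiny pipeline. This is only an illustration under stated assumptions: the four source states, the fixed 2-bit codewords, and the bit-flipping noise model are all hypothetical choices of mine, not something Shannon's architecture prescribes.

```python
import random

# Hypothetical 4-state source alphabet; 2 bits are enough to index each state.
STATES = ["food", "danger", "water", "shelter"]
BITS_PER_STATE = 2


def encode(message: list[str]) -> list[int]:
    """Encoder E: map each source state to a fixed-length binary codeword."""
    bits = []
    for state in message:
        index = STATES.index(state)
        bits.extend((index >> shift) & 1 for shift in reversed(range(BITS_PER_STATE)))
    return bits


def channel(bits: list[int], flip_prob: float, rng: random.Random) -> list[int]:
    """Channel CH: flip each bit independently with probability flip_prob."""
    return [bit ^ (rng.random() < flip_prob) for bit in bits]


def decode(bits: list[int]) -> list[str]:
    """Receiver R: turn each group of bits back into a state for destination D."""
    message = []
    for start in range(0, len(bits), BITS_PER_STATE):
        index = 0
        for bit in bits[start:start + BITS_PER_STATE]:
            index = (index << 1) | bit
        message.append(STATES[index])
    return message


rng = random.Random(0)  # seeded so the run is repeatable
sent = ["danger", "water", "food"]
received_clean = decode(channel(encode(sent), flip_prob=0.0, rng=rng))
received_noisy = decode(channel(encode(sent), flip_prob=0.3, rng=rng))
print(received_clean)  # identical to `sent`, since no bits were flipped
print(received_noisy)  # may differ from `sent`, depending on which bits flipped
```

With `flip_prob=0.0` the destination recovers the message exactly; raising it corrupts codewords, which is the failure mode the rest of this post is concerned with.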

We define the amount of information produced at the source by the occurrence of state $s_i$, which has probability $p(s_i)$:

$$I(s_i) = -\log_2 p(s_i)$$

Eq 1. Bits of information encoded at the sender side, given by the negative logarithm of the probability of the state

Also, given that we know S produces a sequence of messages, we could define the entropy of said source as:

$$H(S) = -\sum_{i} p(s_i) \log_2 p(s_i)$$

Eq 2. Entropy of the sender, given by the negative of the sum, over the set of states, of each state's probability multiplied by the logarithm of that probability

The same functions for bits of information encoded and entropy at the source are also applied to the destination to obtain those values, where $d_j$ is a possible destination state with probability $p(d_j)$:

$$I(d_j) = -\log_2 p(d_j)$$

Eq 3. Bits of information encoded at the destination side, given by the negative logarithm of the probability of the destination state

Also, the entropy at the destination can be obtained by:

$$H(D) = -\sum_{j} p(d_j) \log_2 p(d_j)$$

Eq 4. Entropy of the destination, given by the negative of the sum, over the set of possible destination states, of each state's probability multiplied by the logarithm of that probability
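As a quick worked example of the quantities described in Eq 1 through Eq 4, here is a minimal Python sketch. The probability distribution is a made-up assumption, chosen with powers of two so the arithmetic is easy to check by hand, and logarithms are taken base 2 so the results are in bits.

```python
import math

# Hypothetical distribution over four states (assumed for illustration).
source = {"food": 0.5, "danger": 0.25, "water": 0.125, "shelter": 0.125}


def self_information(p: float) -> float:
    """Bits of information carried by an outcome of probability p."""
    return -math.log2(p)


def entropy(dist: dict[str, float]) -> float:
    """Expected self-information of a distribution, in bits."""
    return sum(p * self_information(p) for p in dist.values() if p > 0)


print(self_information(source["danger"]))  # 2.0
print(entropy(source))                     # 1.75
```

The rarer state carries more bits, and the entropy is the average over all states. The same two functions apply unchanged to a distribution over destination states.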

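Given that we now have H(S) and H(D), we can obtain the Shannon mutual information I(S;D), the information the two ends actually share, by accounting for equivocation and noise: I(S;D) = H(S) - H(S|D) = H(D) - H(D|S), where the equivocation H(S|D) is the uncertainty about the source that remains after observing the destination, and the noise H(D|S) is the uncertainty at the destination that did not originate at the source.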
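A minimal sketch of how mutual information could be computed, assuming we are handed a joint distribution over (source state, destination state) pairs. It uses the standard identity I(S;D) = H(S) + H(D) - H(S,D); the two joint distributions below are invented examples, not measurements.

```python
import math


def entropy(probs) -> float:
    """Shannon entropy in bits of an iterable of probabilities."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def mutual_information(joint: dict[tuple[str, str], float]) -> float:
    """I(S;D) = H(S) + H(D) - H(S,D), from a joint distribution p(s, d)."""
    p_s: dict[str, float] = {}
    p_d: dict[str, float] = {}
    for (s, d), p in joint.items():
        p_s[s] = p_s.get(s, 0.0) + p  # marginal over source states
        p_d[d] = p_d.get(d, 0.0) + p  # marginal over destination states
    return entropy(p_s.values()) + entropy(p_d.values()) - entropy(joint.values())


# Hypothetical channels: one perfect, one where 10% of symbols arrive wrong.
noiseless = {("0", "0"): 0.5, ("1", "1"): 0.5}
noisy = {("0", "0"): 0.45, ("0", "1"): 0.05, ("1", "0"): 0.05, ("1", "1"): 0.45}

print(mutual_information(noiseless))  # 1.0
print(mutual_information(noisy))      # roughly 0.53
```

In the noiseless case the full bit of source entropy reaches the destination; with 10% errors, nearly half of it is lost to equivocation.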

We had established earlier that a sufficiently good metric for gauging the efficiency of information encoding and decoding mechanisms would be to measure the entropic difference between the source and the destination. Given such a basis, we can surmise that inscription-based, or textual, information propagation systems have proven to be the more efficient means of information propagation, more so than any other, unless one were to add a parameter for technological advancement leading to ever more efficient information propagation mechanisms.

Now we can ask the question: given an information propagation system so primitive as hand signals, body movements, and unintelligible sounds, how efficient would one assume such a mechanism to be? How many bits of information are lost, and how much noise is inflicted on such a system? Given formal models of information propagation as laid out by Shannon, we could actually run some mini experiments to arrive at a tangible answer, but I don’t really have sufficient time to do that.
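For a rough sense of what such a mini experiment might look like, here is a sketch under a strong simplifying assumption: each primitive signal is modeled as one use of a binary symmetric channel, whose capacity is known to be 1 - H_b(p) for bit-flip probability p. The noise levels and their labels are hypothetical placeholders, not estimates of how noisy gestures or grunts really are.

```python
import math


def binary_entropy(p: float) -> float:
    """Entropy in bits of a coin that lands heads with probability p."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def bsc_capacity(flip_prob: float) -> float:
    """Capacity, in bits per channel use, of a binary symmetric channel."""
    return 1.0 - binary_entropy(flip_prob)


# Hypothetical noise levels; the labels are illustrative, not measured.
for label, flip_prob in [
    ("noiseless", 0.0),
    ("mild noise", 0.05),
    ("heavy noise", 0.3),
    ("pure noise", 0.5),
]:
    print(f"{label:12s} flip_prob={flip_prob:.2f} "
          f"capacity={bsc_capacity(flip_prob):.3f} bits/use")
```

Capacity falls from one bit per use to zero as the flip probability approaches one half, which is one way to quantify how little a very noisy signalling system can reliably carry.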

Prior to the emergence of any such inscription-based or symbolic communication mechanism, how could one even go about measuring the amount of information encoded at either the source or destination end of the pipeline, given that there’s no standardized glossary that could give us any idea of how such information would be encoded? Let’s, for a moment, imagine the brain, specifically the visual cortex, as the decoder in such a process. The main failure point of such an architecture is the amount of useful information that would ultimately be lost to large amounts of noise. We would ideally desire a more efficient reality compression tool that would make way for a more entropy-preserving and noise-minimizing form of information propagation.

Symbolic methods of information propagation can be thought of as one of the most pivotal paradigm shifts in the history of our species. It seems as though an efficient mechanism of information propagation is a necessary precursor to higher-order thought and cognition. Symbolic methods were such a mechanism: they enabled humans to exercise higher-order symbolic reasoning, spurring ostensibly interpolative, intricately structured knowledge graphs with optimal embedding methods in the biological tensors that project onto the high-dimensional representation space (likely a quasi kernel Hilbert space) from which we attempt to extrapolate meaning. Symbolic methods as denoted by art (drawings, cave carvings, etc.), primitive number systems, and primitive alphabet systems allowed humans to generate ontologies to structure their reality, giving rise to intricate epistemologies. This new ability to effectively structure ontologies and epistemologies would prove to be a more salient bug of our technological progress, for it would eventually bring forth the inception of culture, and as I had noted in an earlier post, it’s not obvious that such an emergence was a net positive for the world.

The emergence of math produced an even greater Kuhnian divergence, more so than the emergence of any other sort of symbolic representation method. Math entailed a betrayal of intuition and priors and required significantly higher levels of abstraction, ultimately bestowing upon us the ability to gain an objective, unintuitive understanding of the more salient abstract spaces that make up the fabric of our universe. Math generally allowed us to ground objectively physical phenomena, mainly high-dimensional geometric objects and unintuitive topologies, in formal models, ostensibly granting us the “manual to the universe”. The sheer force of higher-order symbolic reasoning and generalizable interpolation required to prove a sufficiently advanced conjecture, or to plot and model the trajectories of large cosmic bodies across space, is considerable, and would generally require a large amount of computational power given the higher-dimensional embedding tensor and even higher-dimensional manifold spaces required for iterative extrapolation of useful signals.

To be more succinct, language, as an analog to kernel PCA (KPCA), would entail taking n-dimensional data points (particularly their more salient features) and mapping them in a non-linear manner onto a much larger, higher-dimensional representation space (which can also be conceived of as a kernel Hilbert space). Such a space can be conceived of as analogous, in this context, to the human collective unconscious as denoted by Carl Jung, leading to the more salient aspects of idealism; namely that, “The substantive reality around us is only a reflection of a higher truth. The truth, Plato argued, is the abstraction”.
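The non-linear lift into a higher-dimensional space that this analogy leans on can be illustrated with a small sketch. To be clear about scope, this is not a full KPCA implementation (there is no centering or eigendecomposition here); it only shows the kernel trick underneath it, using a degree-2 polynomial kernel that I picked as an assumption for illustration.

```python
import math


def feature_map(x: tuple[float, float]) -> tuple[float, float, float]:
    """Explicit lift of a 2-D point into the 3-D feature space of (x . y)^2."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2)


def dot(u, v) -> float:
    """Ordinary inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def poly_kernel(x, y) -> float:
    """Degree-2 polynomial kernel, evaluated in the original 2-D space."""
    return dot(x, y) ** 2


x, y = (1.0, 2.0), (3.0, 0.5)
print(poly_kernel(x, y))                    # inner product via the kernel
print(dot(feature_map(x), feature_map(y)))  # same value via the explicit lift
```

Both lines print the same number, up to floating-point rounding: the kernel computes an inner product in the higher-dimensional space without ever constructing that space explicitly.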

This is a really interesting topic and has had a lot of work done on it in the realms of linguistics and computational linguistics. I’d have liked to have gone a lot deeper into this topic from an information-theoretic POV, but I’m constrained both by time and by general levels of interest. Ideally, this post should be considered for what it is: a fun schizoramble about the limits of human qualitative experience, bounded by biology and predicated very importantly on the emergence of entropic-load-preserving methods of communication, beckoning a Jungian collective unconscious projected and preserved in a high-dimensional manifold of symbolic intricacies.